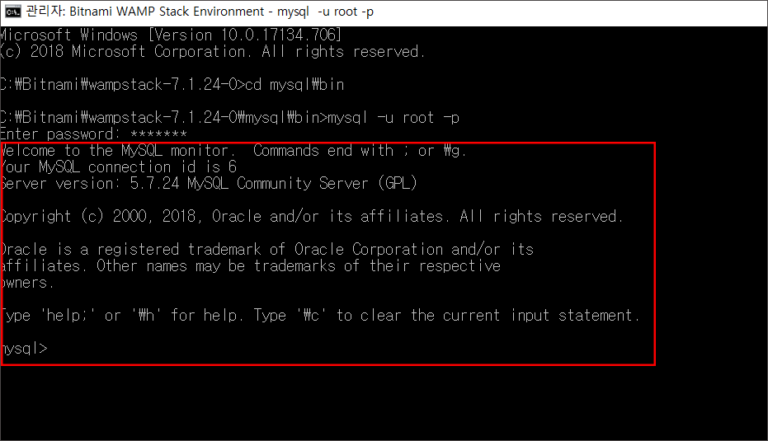

This reduces friction, simplifies the work of DevOps teams and avoids the "it works on my machine" syndrome.īitnami stacks provide a solution to this problem. This means that individual developers working on the application should use the same development environment, and development, production and test environments should also be consistent with each other. If the directories /bitnami/data and /opt/bitnami/kafka/logs are not using local storage, I guess you are using any other kind of storage, you should be able to update the capacity if needed.When developing applications, having consistent server environments is key. It will also probably depend not eh requests done to the Kafka brokers. In the case of the logs, there will be more data stored the longer Kafka runs. It is difficult to predict which should be the size of the storage. To consume those logs you can use the approach of your preference, for example, you could use Logstash.Ĭlarification: the pod uses local node storage for all but these directories: data -> /bitnami/kafka and logs /opt/bitnami/kafka/logs The logs are stored to avoid losing them when a pod is restarted. What if anything is kept on the root disk? how big should be the root disk? does its usage increment? can I configure a fail safe?Ĭould you please clarify this too? What do you mean with the root disk?Ĭlarification: if logs are flushed to storage can they be retrieved by a consumer or consumer group? Mainly the logs if configured, to check if it fits your needs you can try deploying the docker-compose and checking that directory inside the containers. What is kept in "persistence" /data ? does its usage increment? if it increments, can I configure a fail safe? I am not sure what do you mean by this, do you mean getting the volume out of space? 
You can use the logPersistence.size and in case it gets out of space you will need to redimension de volume.ĭoes this happen if a client asks for topic messages from the an early offset and cause latency or failure? If so, what are some strategies to recover? If you don't provide that flag the logs will be sent to stdout only. clear old messages from storage if it nears being exhausted. I do not yet know if there is a configurable Kafka setting to avoid the crash i.e.

Naturally these directories become full and can cause a crash. Persistence mapped to /bitnami/kafka/data/ directoryīy default Kafka server logs are sent to stdout and can be configured to be stored in logPersistence mapped to /opt/bitnami/kafka/logs I have learned through observing the directories in the Kafka pods thatīy default the Bitnami Helm chart persists messages to How do I retrieve logs that are persisted? Can a consumer ask for topic messages from an early offset and cause latency or failure? If so, what are some strategies to recover? configure Kafka to retain everything and delete old log files if quota is exceeded?

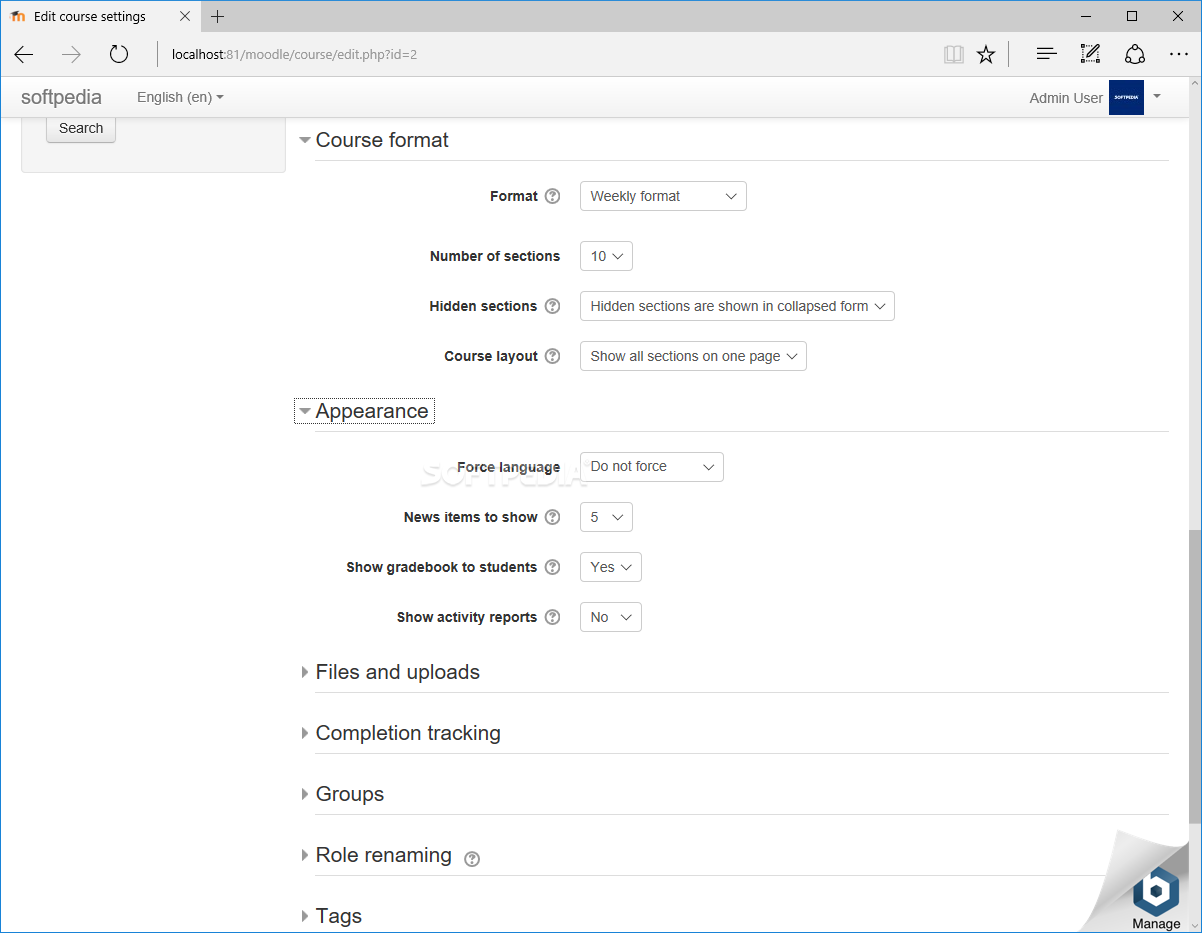

What happens if logPersistence is exhausted?Ĭan I configure a fail safe i.e. LogPersistence is enabled in helm chart by me to retain logs, in an attached volume provisioned by the Helm chart unless I provide an alternative (which I don't). The Helm chart stateful set has these volumes: volumeMounts: From what I understood from the documentation logs are chunks of messages in a topic, when configurable quotas of bytes, messages or time, are exceeded, the logs get flushed to files in storage. I find the documentation storage and persistence allocation, very confusing. I deploy Bitanmi Kafka Helm chart on AWS provisioned with Terraform.
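On the open question of a fail safe for message storage: Kafka does have broker settings that bound how much topic data is kept, namely `log.retention.bytes` (applied per partition, unlimited by default) and `log.retention.hours`, with deletion happening one whole closed segment (`log.segment.bytes`) at a time. These settings govern topic data on the persistence volume, not the server logs written under logPersistence. Below is a minimal Python sketch, assuming size-based retention is enabled, of how a rough upper bound for the data volume could be estimated. The function names and the 20% headroom are illustrative and not part of Kafka or the chart, and the estimate ignores index files and the delay between retention checks.

```python
GIB = 2**30


def max_log_bytes_per_broker(partition_replicas: int,
                             retention_bytes: int,
                             segment_bytes: int) -> int:
    """Rough upper bound on topic-data disk usage for one broker.

    Assumption: Kafka only deletes whole closed segments, so each partition
    replica can hold up to about retention_bytes plus one active segment.
    """
    if retention_bytes <= 0:
        # log.retention.bytes = -1 means no size limit; only time-based
        # retention applies, so no size bound can be computed here.
        raise ValueError("size-based retention is disabled")
    return partition_replicas * (retention_bytes + segment_bytes)


def fits(volume_bytes: int, needed_bytes: int, headroom: float = 0.2) -> bool:
    """True if needed_bytes fits in the volume while keeping `headroom` free."""
    return needed_bytes <= volume_bytes * (1 - headroom)


if __name__ == "__main__":
    # Hypothetical broker: 12 partition replicas, 1 GiB retention per
    # partition, 1 GiB segments, and a 32 GiB persistence volume.
    needed = max_log_bytes_per_broker(12, 1 * GIB, 1 * GIB)
    print(f"estimated upper bound: {needed / GIB} GiB")
    print("fits in a 32 GiB volume:", fits(32 * GIB, needed))
```

If the estimate does not fit, the options are the ones already mentioned in the thread (resize the volume) or a lower retention limit, so that old segments are deleted before the disk fills up.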
